![Exchange-2010-Logo-733341[1]](https://eightwone.com/wp-content/uploads/2009/11/exchange-2010-logo-7333411.png?w=150&h=71) An article on SearchExchange by Greg Shields (MVP for Remote Desktop Services, VMware vExpert, and a well-known writer and speaker) covered Greg’s annoyances with Exchange Server 2010. Apart from some of the arguments being flawed, I doubt these points would make the top 5 annoyances of the average Exchange 2010 administrator. Here’s why.


Role Based Access Control Management

First, the article complains about the complexity of Exchange 2010’s Role Based Access Control system, or RBAC for short. RBAC’s purpose is clear: managing the security of your Exchange environment using elements like roles, groups, scopes and memberships (for more details, check out one of my earlier posts on RBAC here). RBAC was introduced with Exchange 2010 to give organizations more granular control than earlier versions of Exchange, where you had to manage security using a (limited) set of groups and Active Directory. In smaller organizations, the default setup may, with a little modification here and there, suffice.

Larger organizations, on the other hand, can use RBAC to fine-tune the security model to their business demands. And yes, this may get very complex.
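To make the moving parts a bit more concrete, here is a deliberately simplified Python model of the question RBAC answers: who can do what, and where. A management role describes the cmdlets (what), a management scope describes the objects (where), and a role assignment links both to a role group or user (who). The class and function names, and the reduction of a scope to a set of OUs, are my own illustrative assumptions; this is not how Exchange implements RBAC internally, and real role entries can also restrict individual parameters.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ManagementRole:
    # "What": the cmdlets this role permits (hypothetical simplification of role entries).
    name: str
    cmdlets: frozenset


@dataclass(frozen=True)
class ManagementScope:
    # "Where": simplified here to a set of organizational units.
    name: str
    ous: frozenset


@dataclass(frozen=True)
class RoleAssignment:
    # Links a role ("what") to an assignee ("who") within a scope ("where").
    role: ManagementRole
    assignee: str  # e.g. a role group name, or a user name
    scope: ManagementScope


def is_allowed(assignments, memberships, user, cmdlet, target_ou):
    """Return True if any assignment grants `user` the `cmdlet` on objects in `target_ou`."""
    # A user is covered by assignments made to them directly or to any group they belong to.
    principals = memberships.get(user, set()) | {user}
    return any(
        a.assignee in principals and cmdlet in a.role.cmdlets and target_ou in a.scope.ous
        for a in assignments
    )


# Example: an EU helpdesk group may modify mailboxes, but only in the EU OU.
role = ManagementRole("EU Mail Recipients", frozenset({"Get-Mailbox", "Set-Mailbox"}))
scope = ManagementScope("EU Users", frozenset({"OU=EU"}))
assignments = [RoleAssignment(role, "EU Helpdesk", scope)]
memberships = {"alice": {"EU Helpdesk"}}

print(is_allowed(assignments, memberships, "alice", "Set-Mailbox", "OU=EU"))  # True
print(is_allowed(assignments, memberships, "alice", "Set-Mailbox", "OU=US"))  # False
```

Even in this toy form, every permission is the product of three independent choices, which is exactly why a real RBAC design deserves to be thought through once and then scripted.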

Is this annoying? Is it bad that not all of its facets can be managed from the GUI? I think not, for two reasons. First, Exchange administrators should familiarize themselves with PowerShell anyway; it is here to stay and is the scripting language of choice for recent Microsoft product releases. Second, in most cases, setting up RBAC will be a one-time exercise. Thinking it through and setting it up properly is just one aspect of configuring the Exchange 2010 environment. Also, I’ve seen pre-Exchange 2010 organizations with delegated permission models that took a significant amount of time to fully comprehend, easily exceeding the author’s “20-minute test”.

DAG’s three-server minimum

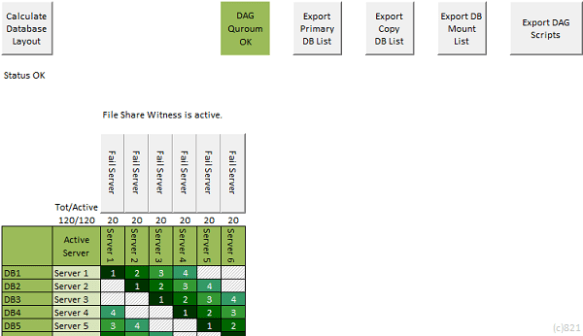

The article talks about the requirement to put the 3rd copy in a Database Availability Group (DAG) on a “witness server” in a separate site to get the best level of high availability. It looks like the author mixed things up, as there is no such thing as a “witness server”, nor a requirement to host anything like it in a separate site. A DAG uses a witness share (File Share Witness, or FSW for short), which is used to determine majority for DAG configurations with an even number of members. This share is hosted on a member server, preferably one that is part of the e-mail infrastructure (e.g. a Hub Transport server), located in the same site as (the largest part of) the user population.
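Because the FSW only exists to break ties, the voting arithmetic is easy to sketch. The Python function below is a simplified illustration of majority voting in the failover cluster underlying a DAG, assuming one vote per DAG member plus one witness vote when the member count is even. The function name is hypothetical and the model ignores other cluster details; it only shows why an odd-numbered DAG needs no witness at all.

```python
def dag_has_quorum(member_count: int, members_up: int, fsw_reachable: bool) -> bool:
    """Simplified quorum check for the failover cluster underlying a DAG.

    Odd member count: each member has one vote (node majority).
    Even member count: the file share witness adds one tie-breaking vote
    (node and file share majority).
    """
    uses_fsw = member_count % 2 == 0
    total_votes = member_count + (1 if uses_fsw else 0)
    available_votes = members_up + (1 if uses_fsw and fsw_reachable else 0)
    # Quorum requires a strict majority of all votes.
    return available_votes > total_votes // 2


# Two-member DAG, one member down: the FSW decides.
print(dag_has_quorum(2, 1, fsw_reachable=True))   # True
print(dag_has_quorum(2, 1, fsw_reachable=False))  # False
# Three-member DAG: no witness involved, two surviving members are a majority.
print(dag_has_quorum(3, 2, fsw_reachable=False))  # True
```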

Also, there’s no requirement for a 3rd DAG member when high availability is required. The 3rd DAG member is a Microsoft recommendation when using JBOD storage. For disaster recovery, a remote 3rd DAG member could make sense, but then you wouldn’t need a “witness server”, given the odd number of DAG members.

Note that there is a difference between high availability and disaster recovery. Having multiple copies in the same site offers high availability; having remote copies provides resilience. More information on DAGs and the role of the File Share Witness can be found in my Datacenter Activation Coordination article here.

Server Virtualization and DAGs

Next, the article states that Exchange 2010 DAGs don’t support the high availability options provided by the virtualization platform, which is spot on. Microsoft and VMware have been squabbling for some time over DAGs in combination with VMware’s HA/DRS options, leading to the mentioned support statement from Microsoft. VMware did its part by including statements like “VMware does not currently support VMware VMotion or VMware DRS for Microsoft Cluster nodes“, but burying this as a side note on page 64 of the best practices guide doesn’t help. More recently, VMware published a support table for VMware HA/DRS and Exchange 2010 indicating a “YES” for VMware HA/DRS in combination with an Exchange 2010 DAG. I hope that was a mistake.

In the end, I doubt that the lack of support for DAGs in combination with VMware HA/DRS (or similar products from other vendors) will be the potential deal breaker the author claims. That might be true for organizations already using those options as part of their strategy. In that case, it comes down to evaluating running Exchange DAGs without those options (which Exchange will happily do). Not only does that give organizations Exchange’s own availability and resilience options, with far greater flexibility and functionality than a non-application-aware virtualization platform offers, it also saves some money in the process. For example, where VMware can recover from data center or server failures, DAGs can also recover from database failures and several forms of corruption.

Exchange 2010 routing

The article then turns to Exchange 2010 following Active Directory sites for routing. While this is true, it isn’t something new. With the arrival of Exchange 2007, routing groups and routing group connectors were traded for AD sites to manage the routing of messages.

The writer’s annoyance here is that Exchange must be organized to follow the AD site structure. Is that bad? I think not. Of course, with Exchange 2003, organizations could get away without defining AD sites, so they should (re)think their site structure anyway, since more and more products use AD site information. I also think organizations that haven’t designed an appropriate AD site structure following recommendations may have bigger issues than Exchange. In addition, other products like System Center also rely on a proper AD site design.

Also, when required, organizations can control message flow in Exchange using, for instance, hub sites or connector scoping. It is also possible to override site link costs for Exchange. While not every organization will use these settings, they address the needs of most. Also, by being site-aware, Exchange 2010 can offer functionality not found in Exchange 2003, e.g. Autodiscover, or Client Access servers and CAS arrays having site affinity.
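As a rough illustration of the cost override: Exchange routes between AD sites along the least-cost path computed from the IP site link costs, and an Exchange-specific cost set with `Set-AdSiteLink -ExchangeCost` takes precedence over the AD cost for Exchange routing. The Python sketch below shows only that idea, using Dijkstra’s algorithm; the site names and classes are hypothetical, and it deliberately ignores hub sites, hop limits and the tie-breaking rules Exchange applies.

```python
import heapq
from dataclasses import dataclass
from typing import Optional


@dataclass
class SiteLink:
    # Hypothetical model of an AD IP site link between two sites.
    site_a: str
    site_b: str
    ad_cost: int
    exchange_cost: Optional[int] = None  # Exchange-only override, if configured

    def effective_cost(self) -> int:
        # Use the Exchange override when set, otherwise the AD site link cost.
        return self.exchange_cost if self.exchange_cost is not None else self.ad_cost


def least_cost_route(links, source, target):
    """Return (total_cost, [sites]) for the cheapest path, or None if unreachable."""
    graph = {}
    for link in links:
        cost = link.effective_cost()
        graph.setdefault(link.site_a, []).append((link.site_b, cost))
        graph.setdefault(link.site_b, []).append((link.site_a, cost))

    # Dijkstra's algorithm: always expand the cheapest path found so far.
    queue = [(0, source, [source])]
    visited = set()
    while queue:
        cost, site, path = heapq.heappop(queue)
        if site == target:
            return cost, path
        if site in visited:
            continue
        visited.add(site)
        for neighbor, link_cost in graph.get(site, []):
            if neighbor not in visited:
                heapq.heappush(queue, (cost + link_cost, neighbor, path + [neighbor]))
    return None


links = [
    SiteLink("Amsterdam", "London", 10),
    SiteLink("London", "Seattle", 10),
    SiteLink("Amsterdam", "Seattle", 30),
]
print(least_cost_route(links, "Amsterdam", "Seattle"))  # (20, ['Amsterdam', 'London', 'Seattle'])

# Lowering only the Exchange cost of the direct link changes Exchange's path,
# without touching the AD cost other products rely on.
links[2].exchange_cost = 5
print(least_cost_route(links, "Amsterdam", "Seattle"))  # (5, ['Amsterdam', 'Seattle'])
```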

Ultimately, it is possible to set up a separate Exchange forest. By using a separate forest with a different site structure, organizations can isolate directory and Exchange traffic to route it through different channels.

CAS High Availability complicated?

The last annoyance mentioned in the article is the lack of wizards to configure CAS HA features, e.g. to configure a CAS array with network load balancing the way the DAG wizard installs and configures fail-over clustering for you. While true, I don’t see this as an issue. Setting up NLB was not too complex and suited small businesses, but nowadays Microsoft recommends using a hardware load balancer, making NLB less important. And while wizards are nice, most steps should be performed as part of a (semi)automated procedure, e.g. reconfiguring after a fail/switch-over. Such a procedure or script can be tested properly, making it less prone to error.

The article also considers network load balancing and Windows fail-over clustering being mutually exclusive an annoyance. Given that hardware load balancing is recommended, and that cost-effective supported appliances have become available, this restriction is becoming a non-issue.

More information on configuring CAS arrays here and details on NLB with clustering here.

Final words

Now don’t get the impression I want to condemn Greg for sharing his annoyances with us. But when reading the article, I couldn’t resist responding to some inaccuracies and sharing my views in the process. Most important is that we learn from each other while discussing our perspectives and views on the matter. Having said that, you’re invited to comment or share your opinions in the comments below.

With the end of TechEd NA 2011, so ends a week of interesting sessions. Here’s a quick overview of recorded Exchange-related sessions for your enjoyment:

